You’ve spent three hours recording a voiceover. The audio is decent — but your microphone picked up the AC unit, your “s” sounds are a little too sharp, and now you’re staring at Audacity wondering if this is really how professionals do it.

There’s a quieter option that’s been picking up serious traction among content creators and development teams: AI voice generation. Specifically, a platform called Noiz AI — a text-to-speech, voice cloning, and emotional voice design tool that promises to turn your script into broadcast-ready audio in under three seconds.

I dug into everything Noiz AI does, tested it across real use cases, and compared it honestly against the alternatives. Here’s what actually matters — and what the marketing glosses over.

What Is Noiz AI?

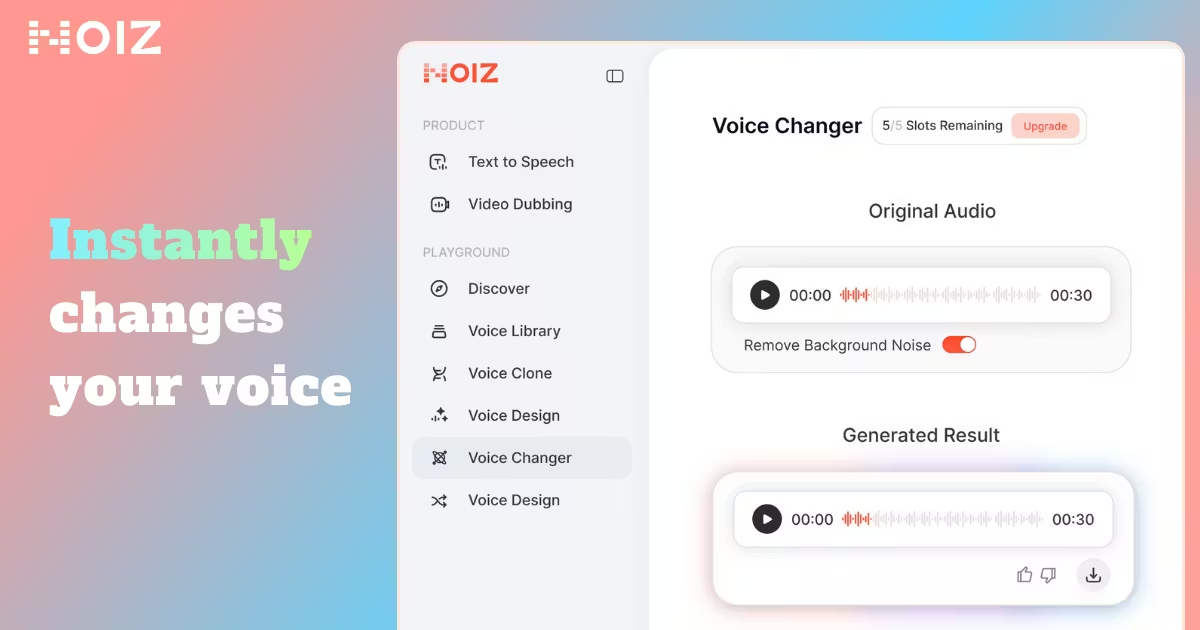

Noiz AI is an AI-powered voice generation platform that does three things: text-to-speech (TTS), voice cloning, and video dubbing. You type your script, choose a voice, dial in the emotional tone, and get clean audio back in roughly one to three seconds.

What makes it different from a basic TTS engine is the emotional layer. Most tools just read your text. Noiz AI tries to feel it — adding natural pacing, breath sounds, and tone shifts that make the output sound less like a robot and more like an actual human narrator.

According to Noiz, the platform is built on a large-scale proprietary speech model trained on millions of hours of human speech. The company says it serves over 800,000 users globally, from solo YouTubers to SaaS teams running localization pipelines across 15 markets.

Who Is It Actually For?

Noiz AI isn’t a one-size-fits-all tool. It genuinely shines for specific use cases:

Content creators and YouTubers who need consistent, high-quality voiceovers across a large volume of videos — without hiring a voice actor for every upload.

E-learning developers and educators building course content who want studio-quality narration without a studio. An educator recording lessons with background noise and inconsistent audio quality can rebuild their entire audio library with a single cloned voice.

Podcast producers who want to launch without microphone equipment or bring in multiple “voices” for a more dynamic show format.

SaaS companies and startups needing to localize their onboarding videos, product demos, or tutorials across multiple languages fast — without paying $2,000+ per language for traditional dubbing.

App developers and engineers who need a reliable voice API for features like audiobook playback, meditation guidance, virtual assistants, or in-app narration.

TikTok and short-form creators who want expressive, stylized voiceovers that actually match the emotional pace of fast-cut content.

If you need one voice, occasionally, for a simple task — the free tier works. If you’re building a workflow around voice content at scale, the paid plans start to make real financial sense.

Key Features

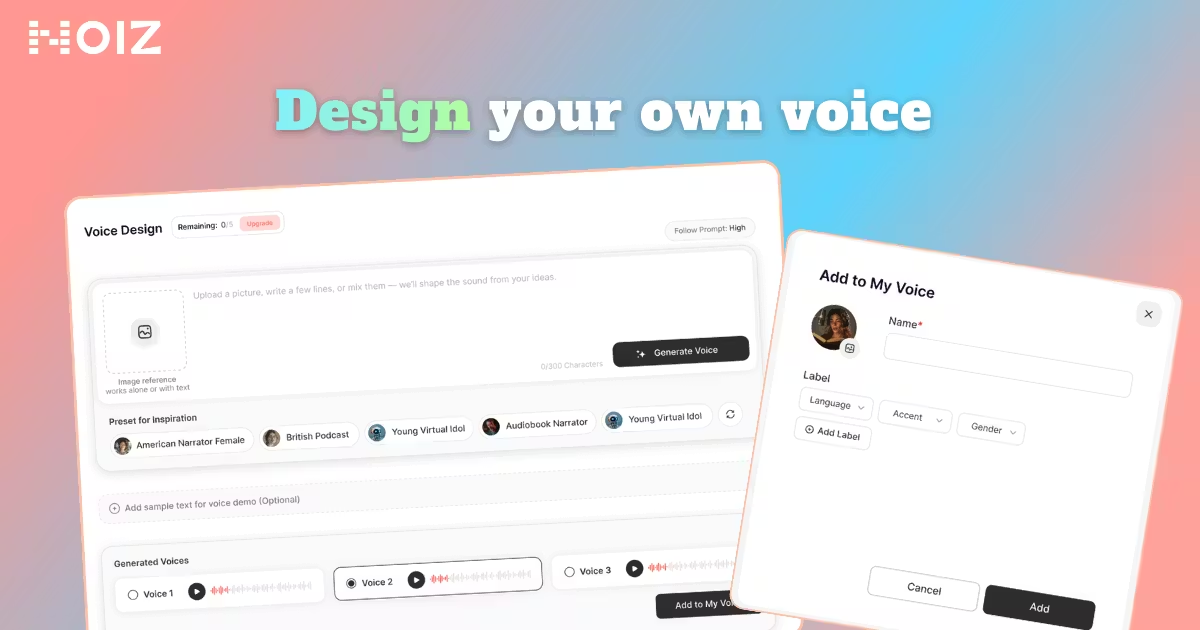

Emotional Text-to-Speech

This is Noiz AI’s signature capability. Rather than delivering flat, monotone audio, the emotional TTS engine lets you select moods — happy, sad, curious, angry, excited, calm — and the voice adjusts its delivery accordingly.

Why does this matter? Because the difference between a voice that reads and one that connects is what keeps listeners engaged past the first 30 seconds. A meditation app narrated in a clinical tone is just noise. The same script delivered with the right pacing and warmth is a product.

Noiz AI handles tone, pace, pauses, and even subtle breath sounds as part of its output — not as a post-processing step you have to add yourself.

Voice Cloning

Upload three to ten seconds of audio from a voice you have permission to use, and Noiz AI produces a digital replica of that voice — available instantly, with no processing queue.

The consent-based cloning model matters here. The platform builds permission workflows into the cloning process, which protects both users and the people whose voices are being replicated. This is becoming non-negotiable as AI voice regulations tighten globally.
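Because the upload window is so short, it's worth checking a clip's length before you submit it. The sketch below uses only Python's standard `wave` module and assumes an uncompressed PCM WAV file. The 3–10 second bounds come from the figures above. Noiz AI's own upload validation may apply different limits or accept other formats, and the helper names are illustrative.

```python
import wave

# Sample-length window quoted for Noiz AI voice cloning (3-10 seconds).
# Assumption: the platform's real limits may differ.
MIN_SECONDS = 3.0
MAX_SECONDS = 10.0


def clip_duration_seconds(path: str) -> float:
    """Return the duration of a PCM WAV file in seconds."""
    with wave.open(path, "rb") as wav:
        frames = wav.getnframes()
        rate = wav.getframerate()
    return frames / rate


def check_clone_sample(path: str) -> tuple[bool, float]:
    """Report whether a WAV clip falls inside the 3-10 second window,
    along with its measured duration."""
    duration = clip_duration_seconds(path)
    return MIN_SECONDS <= duration <= MAX_SECONDS, duration
```

For example, `check_clone_sample("my_voice.wav")` returns a `(fits_window, seconds)` pair. `my_voice.wav` is a placeholder filename. Compressed formats such as MP3 would need a different library to read.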

Multilingual Dubbing and Video Translation

Drop in a video. Select a target language. Noiz AI translates the audio while preserving the original timing and delivery style — so the dubbed version doesn’t feel like a dubbed version.

This is the feature that saves localization teams the most money. Traditional dubbing for 15 languages at $2,000+ per language comes to $30,000 or more. Noiz AI's dubbing pipeline brings that down significantly while keeping the result authentic.

Vast Voice Library

150+ voice options across styles, ages, accents, and tones. Whether you need a warm female narrator for a wellness app, a deep authoritative voice for a documentary, or a playful character voice for an animated explainer — there’s a starting point here.

The library covers 140 languages, making it genuinely useful for global content strategies.

Developer API

For teams building voice into products, Noiz AI offers a straightforward REST API with roughly one to three seconds of generation latency. That’s fast enough for near-real-time application features and well within tolerance for batch content production pipelines.

SDKs and API documentation are built for production — not just demos — which matters when you’re integrating into e-learning platforms, audiobook apps, or voice assistants.
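At one to three seconds per request, a batch job that generates clips one at a time spends most of its run waiting. A common fix is to keep a few requests in flight at once. The sketch below shows that pattern with `asyncio`. It does not reproduce Noiz AI's actual REST API: `synthesize_batch`, `fake_synthesize`, and the per-script synthesize callable are hypothetical stand-ins. `max_concurrent=4` is an arbitrary default you would tune to whatever rate limits the real API enforces.

```python
import asyncio
from typing import Awaitable, Callable

# Hypothetical signature: takes script text, returns audio bytes.
# Replace with a real client call once you know the actual endpoint.
Synthesizer = Callable[[str], Awaitable[bytes]]


async def synthesize_batch(
    scripts: list[str],
    synthesize: Synthesizer,
    max_concurrent: int = 4,
) -> list[bytes]:
    """Generate audio for many scripts, keeping at most `max_concurrent`
    requests in flight so the batch doesn't flood the API."""
    semaphore = asyncio.Semaphore(max_concurrent)

    async def run_one(text: str) -> bytes:
        # Wait for a free slot before issuing the request.
        async with semaphore:
            return await synthesize(text)

    # gather returns results in the same order as the input scripts.
    return await asyncio.gather(*(run_one(s) for s in scripts))


async def fake_synthesize(text: str) -> bytes:
    """Offline stand-in for a TTS call: sleeps briefly to mimic latency."""
    await asyncio.sleep(0.01)
    return text.encode("utf-8")
```

To try it offline, `asyncio.run(synthesize_batch(["Lesson one.", "Lesson two."], fake_synthesize))` returns the clips in input order, because `asyncio.gather` preserves ordering regardless of which request finishes first.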

How It Compares to Alternatives

Noiz AI vs ElevenLabs

ElevenLabs is the benchmark for raw voice realism. Its cloning accuracy is exceptional, and its voice library is massive — over 5,000 voices across 70+ languages. If you’re producing audiobooks where every sentence needs to sound like a professional narrator, ElevenLabs has a strong edge.

Where Noiz AI pulls ahead: emotional range, dubbing workflow, and price. ElevenLabs plans run $5–$99/month depending on tier. Noiz AI’s Starter plan is under $4/month. For creators who need the full pipeline — TTS, cloning, and dubbing — in one place without paying for three different tools, Noiz AI is more practical.

Noiz AI vs Murf AI

Murf AI is a solid option for corporate training, marketing videos, and presentations. Its interface is clean, editing is intuitive, and team collaboration features are built in. But Murf doesn’t have the same emotional depth on voice output, and its dubbing capabilities aren’t as developed.

Noiz AI is the better pick when emotional nuance and multilingual video work are priorities. Murf wins for teams who primarily need simple, polished voiceover in English with good production controls.

My Hands-On Experience

I ran Noiz AI through a few realistic scenarios over a week of testing.

Scenario 1 — Educational content narration: I fed it a 600-word explainer script and cycled through a few different emotional tones. The “teaching” delivery — calm, measured, with natural pauses — held up really well. It didn’t sound like an AI reading. It sounded like someone who understood the content.

Scenario 2 — Voice cloning: I uploaded a clean 8-second voice sample and cloned it. The output was impressively close, with the same cadence and the same subtle warmth as the original. For brand consistency across a content series, this would work.

Scenario 3 — Video dubbing: I tested a short 2-minute English explainer video, dubbing it into Spanish. Timing held. Emotional delivery translated. The result wasn’t flawless — there were a couple of spots where the dubbed audio felt slightly rushed — but it was significantly better than I expected for a one-click operation.

| Aspect | Verdict |
|---|---|
| ✅ Voice realism | Strong, especially with emotional settings |
| ✅ Cloning speed | Instant, with genuinely no wait time |
| ✅ Dubbing quality | Surprisingly natural for automated output |
| ✅ Pricing | Aggressive; cheaper than most comparable tools |
| ⚠️ Voice library depth | 150+ voices is good, but not ElevenLabs-level |
| ⚠️ Dubbing edge cases | Long, complex videos can have minor timing slips |
| ❌ Offline access | Cloud-only; no desktop app currently |

Common Misconceptions

“AI voice cloning is illegal or unethical.”

Not inherently. The legal and ethical question is about consent. Noiz AI’s model requires that you clone voices you have permission to use — whether that’s your own voice, a voice actor who’s authorized the use, or a licensed voice. Cloning public figures without permission, or using cloned voices to deceive or defame — that’s where ethical and legal issues arise. Used responsibly, voice cloning is a legitimate production tool.

“You need a long audio sample to clone a voice.”

Noiz AI needs only 3–10 seconds of audio. You don't need a studio session or a full recording of someone reading a passage. A short, clean clip is enough to generate a working digital voice replica.

“Emotional AI voices still sound robotic.”

This was true two years ago. The gap has closed significantly. Noiz AI’s output, especially with emotional settings enabled, consistently surprises first-time users who expect that hollow, synthetic quality. The breath sounds and natural pacing are what make the difference — and both are built into the model’s output by default.

Pro Tips & Hidden Tricks

1. Use short, punchy sentences in your script. The TTS engine handles sentence rhythm better when you write the way you’d naturally speak. Long, compound sentences can produce awkward pauses or unnatural emphasis.

2. Test multiple emotional settings on the same paragraph. “Curious” and “calm” often produce noticeably different results on identical text. Spend two minutes cycling through options before committing — the variation is wider than you’d expect.

3. For video dubbing, clean your source audio first. Background noise in the original video affects how well the translation preserves timing. A clean audio track going in means a cleaner dub coming out.

4. Take the free daily bonus credits seriously. Paid plans include 2,000 daily bonus credits on top of the monthly allocation. If you’re a daily user, these stack up fast and effectively extend your usable quota.

5. For long-form content, break scripts into sections. Generating full chapters as a single input can occasionally produce pacing inconsistencies near the end. Splitting into 300–500 word chunks and reviewing each section gives you more control over the final edit.
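Tips 1 and 5 are easy to automate before anything reaches the TTS engine. The sketch below flags long sentences and packs a script into chunks of whole sentences capped at 500 words. The 25-word "long sentence" threshold and the regex-based sentence splitter are assumptions of this example, not Noiz AI features. The splitter will also mis-handle abbreviations such as "e.g.".

```python
import re

MAX_CHUNK_WORDS = 500      # upper end of the 300-500 word range in tip 5
LONG_SENTENCE_WORDS = 25   # assumed threshold for a "long, compound" sentence


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def long_sentences(text: str, limit: int = LONG_SENTENCE_WORDS) -> list[str]:
    """Return sentences with more than `limit` words (rewrite candidates)."""
    return [s for s in split_sentences(text) if len(s.split()) > limit]


def chunk_script(text: str, max_words: int = MAX_CHUNK_WORDS) -> list[str]:
    """Greedily pack whole sentences into chunks of at most `max_words`
    words, so no chunk ends mid-sentence. A single sentence longer than
    `max_words` becomes its own oversized chunk."""
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for sentence in split_sentences(text):
        n = len(sentence.split())
        # Close the current chunk if this sentence would push it over the cap.
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks
```

Packing whole sentences avoids cutting a chunk mid-sentence, which would create exactly the kind of unnatural pause the tips warn about. The last chunk can fall below 300 words. If it is very short, merge it into the previous chunk by hand.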

Pricing — Is It Worth It?

Noiz AI uses a credit-based system across three tiers:

Free Plan — A starting credit allocation with limited generation speed. Good for testing and occasional personal use. Exports include a watermark.

Starter (~$3.90/month) — 150,000 credits/month plus 2,000 daily bonus credits. Priority generation speed, unlimited voice cloning, and watermark-free exports. For solo creators publishing regularly, this tier is genuinely enough.

Creator (~$11.90/month) — 500,000 credits/month with the same daily bonus, priority speed, unlimited cloning, and watermark-free output. Built for high-volume workflows — content teams, agencies, or developers running production pipelines.
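Because the daily bonus is a flat 2,000 credits on both paid tiers, it matters proportionally more on Starter. The arithmetic below makes several assumptions: a 30-day month, that the bonus is claimed and used every day, and the approximate prices listed above. How much audio a credit buys isn't covered here, so treat the output as a relative comparison only. The `Plan` type and helper names are illustrative.

```python
from dataclasses import dataclass

DAILY_BONUS = 2_000  # daily bonus credits quoted for paid plans


@dataclass(frozen=True)
class Plan:
    name: str
    price_usd: float      # approximate monthly price
    monthly_credits: int  # base monthly allocation


PLANS = [
    Plan("Starter", 3.90, 150_000),
    Plan("Creator", 11.90, 500_000),
]


def effective_credits(plan: Plan, days_claimed: int) -> int:
    """Monthly allocation plus the daily bonus for each day it is claimed."""
    return plan.monthly_credits + DAILY_BONUS * days_claimed


def usd_per_1k_credits(plan: Plan, days_claimed: int) -> float:
    """Price per 1,000 usable credits, given how many bonus days are claimed."""
    return plan.price_usd / effective_credits(plan, days_claimed) * 1_000


# Compare each plan with no bonus days and with a full 30-day month of bonuses.
for plan in PLANS:
    for days in (0, 30):
        print(
            f"{plan.name}: {effective_credits(plan, days):,} credits, "
            f"${usd_per_1k_credits(plan, days):.4f} per 1k credits "
            f"({days} bonus days)"
        )
```

Claiming the bonus every day lifts Starter from 150,000 to 210,000 credits a month and Creator from 500,000 to 560,000. On those assumptions, Starter works out cheaper per credit than Creator (about $0.019 versus $0.021 per 1,000 credits). Without the bonus, Creator is the cheaper of the two (about $0.024 versus $0.026).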

Compare this to hiring voice actors ($500–$2,000 per project) or ElevenLabs ($5–$99/month for comparable features). Noiz AI’s pricing is aggressive. The Starter plan in particular is hard to beat for what it includes.

Who gets genuine value from paid plans: Anyone producing more than a few pieces of audio content per month. The watermark-free exports alone justify the Starter price if you’re publishing professionally.

Who should stay on free: Casual experimenters, students testing the technology, or developers evaluating the API before committing to integration.

Final Verdict

Noiz AI is one of the most complete AI voice generation platforms available right now — and it’s priced in a way that makes it accessible to creators who aren’t running enterprise budgets.

The emotional TTS is the genuine differentiator. If your output needs to feel like something — a course that teaches, a meditation that calms, a story that pulls the listener in — the emotional controls give you a level of nuance that flat TTS tools can’t match.

Voice cloning is fast and accurate. Multilingual dubbing is the most impressive single feature for teams expanding globally.

You should use Noiz AI if: You’re a content creator, educator, podcaster, or developer who needs professional-grade voice audio at scale without the cost and logistics of traditional voice production.

You might look elsewhere if: Your primary need is audiobook-quality narration where every syllable counts and you have the budget for ElevenLabs — or if you need real-time, ultra-low-latency speech for live interactive applications where Deepgram may be more appropriate.

For most people building a real voice content workflow in 2026, Noiz AI is the smartest starting point.
